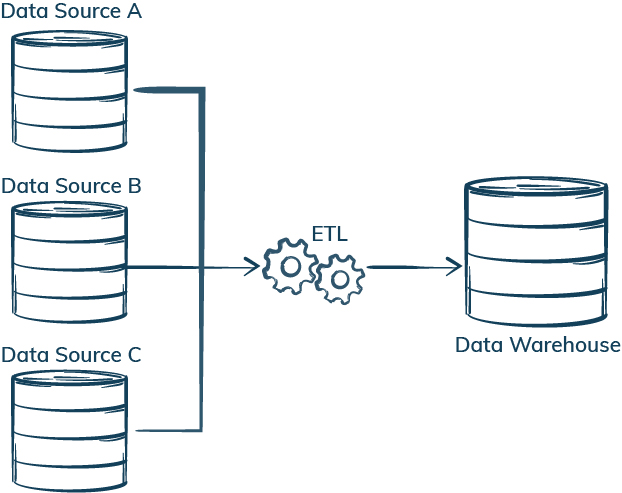

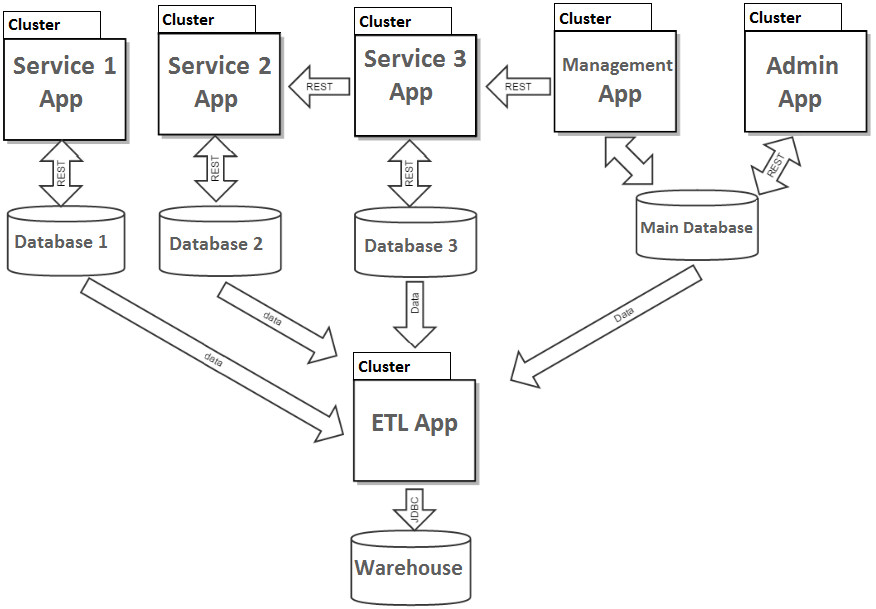

The Extract, Transform, and Load process (ETL for short) is an automated process in which you extract data from various data sources, transform it into a format suitable for loading into a data warehouse, and then load it. The result is data in a digestible format for efficient analysis.

As its name suggests, an ETL routine consists of three distinct steps, which often take place in parallel: data is extracted from one or more data sources, it is converted into the required state, and it is loaded into the desired target, usually a data warehouse, data mart, or database.

In a standard ETL pipeline built on batch processing, raw data is extracted from different source systems, transformed, and loaded in batches into the data warehouse (DWH). ETL tools automate the data migration process, and you can set them up to integrate data changes periodically or even at runtime. Because creating an enterprise ETL workflow from scratch is difficult, teams often rely on ETL workflow solutions like Hevo or Blendo to simplify and automate much of the process. Microsoft SQL Server Integration Services (SSIS) is a platform for building high-performance data integration solutions, including extraction, transformation, and load (ETL) packages for data warehousing.

Data Warehouse and ETL/ELT Testing – Automate testing and data validation checks for your data warehouse, data vault and ETL/ELT processes.

Testing in an automated Data Warehouse Project – Maximize the degree of automation by automating test processes in generative data warehouse projects.

Data Validation and Quality Monitoring – Continuous validation and monitoring of enterprise data to ensure quality and guarantee error-free usage.

DataOps Automation – BiG EVAL assists with automated testing and validation of data, enhancing efficiency and reliability in DataOps.

Teradata Data Validation – Validate large data volumes stored and processed in Teradata systems.

SAP Data Validation – Validate data used in business logic and reporting, and test data integration, export and import processes.

WhereScape Test Automation – Automate test tasks for data warehouses created with WhereScape and perform health checks of the WhereScape model.

Postal Address Validation – Geocode address data and compare it with a reliable source, such as a postal service database, to ensure its accuracy and existence.

Data Integration Testing – Seamless quality assurance for data integration processes in development and live environments.
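To make the extract, transform, and load stages and a basic validation check more concrete, here is a minimal batch pipeline sketch in Python. It is only an illustration under stated assumptions: two in-memory SQLite databases stand in for the source system and the data warehouse, and the table names (`raw_orders`, `orders`) and function names are hypothetical. It does not represent the API of BiG EVAL, SSIS, Hevo, or Blendo.

```python
import sqlite3


def extract(source, batch_size):
    """Yield batches of raw rows from the (hypothetical) source table."""
    cursor = source.execute("SELECT id, name, amount FROM raw_orders ORDER BY id")
    while True:
        batch = cursor.fetchmany(batch_size)
        if not batch:
            break
        yield batch


def transform(batch):
    """Convert rows into the required state: clean names, cast amounts to float."""
    return [(row_id, name.strip().title(), float(amount))
            for row_id, name, amount in batch]


def load(target, batch):
    """Write one transformed batch into the (hypothetical) warehouse table."""
    target.executemany(
        "INSERT INTO orders (id, name, amount) VALUES (?, ?, ?)", batch)
    target.commit()


def run_pipeline(source, target, batch_size=2):
    """Move data from source to target one batch at a time."""
    for batch in extract(source, batch_size):
        load(target, transform(batch))


def validate(source, target):
    """Reconcile source and target: row counts and amount totals must match."""
    src_count, src_total = source.execute(
        "SELECT COUNT(*), SUM(CAST(amount AS REAL)) FROM raw_orders").fetchone()
    tgt_count, tgt_total = target.execute(
        "SELECT COUNT(*), SUM(amount) FROM orders").fetchone()
    return src_count == tgt_count and abs(src_total - tgt_total) < 1e-9


def demo():
    """Build sample data (made up for illustration), run the pipeline, validate."""
    source = sqlite3.connect(":memory:")
    source.execute("CREATE TABLE raw_orders (id INTEGER, name TEXT, amount TEXT)")
    source.executemany(
        "INSERT INTO raw_orders VALUES (?, ?, ?)",
        [(1, " alice ", "10.50"), (2, "BOB", "3"), (3, "carol", "7.25")])

    target = sqlite3.connect(":memory:")
    target.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, name TEXT, amount REAL)")

    run_pipeline(source, target)
    rows = target.execute(
        "SELECT id, name, amount FROM orders ORDER BY id").fetchall()
    return rows, validate(source, target)


if __name__ == "__main__":
    loaded_rows, is_valid = demo()
    print(loaded_rows)
    print("validation passed:", is_valid)
```

The reconciliation in `validate` compares only row counts and one column total. A real data integration test would likely add per-column and per-row checks, but the same source-versus-target comparison idea applies.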
